Program Overview

MCC’s $474 million Indonesia Compact (2013–2018) funded the $73.2 million Procurement Modernization Project to improve procurement efficiency and ensure that procurement quality serves the public interest. The project aimed to achieve significant government expenditure savings with no loss of procured goods and services. Implemented in two phases, the project included activities to build a career path for procurement civil servants, create the roles and structures that provide procurement professionals with the authority to implement good practice, and strengthen controls such as procurement and financial audits to improve institutional performance.

Evaluator Description

MCC commissioned Abt Associates to conduct an independent final performance and impact evaluation of the Procurement Modernization Project. Full report results and learning: https://data.mcc.gov/evaluations/index.php/catalog/188.

Key Findings

Organizational Change in Procurement Systems

- The project boosted trust and collaboration among Procurement Service Unit (PSU) staff. However, PSU staff perceptions of corruption did not decline.

- The project created more permanent legal entities for PSUs, but there was no evidence of improved PSU adoption of procurement policies and systems.

- The project improved staff procurement knowledge marginally.

- There was no evidence of impact on staffing, possibly because of staff perceptions of limited advancement opportunities, low pay, and high workloads.

Final Procurement Outcomes

- There was no evidence that staff satisfaction with the quality of procurement outcomes changed.

- Based on tender-level data from the Procurement Management Information System (PMIS), the time taken to procure goods and services did not change.

- There was no evidence of increased cost savings, as measured by the difference between the budget ceiling amount and the value of the winning bid.

Evaluation Questions

The final performance and impact evaluation answered questions on organizational change and procurement outcomes. Some of these questions were:

1. Did the program result in a change in culture or shared values, systems, skills, and staffing?

2. What types of organizational or operational changes are taking place at the PSU level?

3. What types of procedural changes are taking place in the execution of procurements?

4. Have trainee procurement knowledge and skills improved?

5. Are there detectable improvements in budget execution and efficiency of procurement execution in the PSUs and associated spending units?

Detailed Findings

Organizational Change in Procurement Systems

These findings build upon the interim evaluation report results published in 2019.

The evaluation found no evidence that PSU procurement staff perceptions of corruption declined. However, qualitative data indicate greater trust and collaboration in PSUs. The project also increased PSU permanence. The evaluation found no evidence that the project’s PSU-level activities led to greater adoption of new procurement tools and systems introduced by the project. This outcome may be explained by the nationwide policy changes that increased these systems’ adoption even in non-treatment PSUs. The project marginally improved staff knowledge of procurement: quiz scores rose by slightly more than 1 point (out of a maximum score of 18).

Final Procurement Outcomes

Using tender-level data, the evaluation assessed the total time to procure goods and services and found no evidence of a reduction as a result of the project. The evaluation also found no impact on cost efficiency as measured by the tender-level difference in the budget ceiling for a procurement compared with the winning bid value. This evidence suggests that the additional effort expended by the project to reach specific PSUs has not led to results beyond the influence of the nationwide policy changes.
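The cost-efficiency measure described above can be sketched in a few lines. This is only an illustration: the tender values are hypothetical, the function names are invented for this example, and expressing the saving as a share of the ceiling is an assumption, since the evaluation describes only the difference between the budget ceiling and the winning bid.

```python
def absolute_saving(budget_ceiling, winning_bid):
    """Difference between the budget ceiling and the winning bid for one tender."""
    return budget_ceiling - winning_bid


def saving_share(budget_ceiling, winning_bid):
    """Saving as a share of the ceiling (an assumed normalization, not from the report)."""
    if budget_ceiling <= 0:
        raise ValueError("budget ceiling must be positive")
    return absolute_saving(budget_ceiling, winning_bid) / budget_ceiling


# Hypothetical tenders: (budget ceiling, winning bid), in any common currency unit.
tenders = [(1000.0, 900.0), (500.0, 480.0), (2000.0, 1700.0)]

savings = [absolute_saving(ceiling, bid) for ceiling, bid in tenders]
shares = [saving_share(ceiling, bid) for ceiling, bid in tenders]
mean_share = sum(shares) / len(shares)
print(f"mean saving share across tenders: {mean_share:.1%}")
```

A larger share means the winning bid came in further below the ceiling. The evaluation compared this kind of tender-level measure between treatment and comparison PSUs over time.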

Economic Rate of Return

MCC considers a 10% economic rate of return (ERR) the threshold to proceed with the investment. Although the evaluator did not recalculate the ERR, they provided feedback on the validity of the ERR produced by MCC (13.3%) in light of the evaluation findings. MCC was not able to calculate an ex ante ERR due to an unclear program logic and difficulties in developing an economic model.

In 2018, MCC estimated a post-compact ERR of 13.3% for the project. In estimating this return, MCC considered benefit streams from improved value for money in procurement of construction and non-construction goods and services. It also considered the benefit from improved budget execution. To estimate the total stream of benefits, MCC assumed that all construction projects delivered a 1.4 percent value-for-money gain and applied that return to the total construction spending over time. MCC was not able to measure benefits from non-construction goods and services due to data difficulties. MCC also found budget execution expenditure 5.2 percent higher than planned. The impact evaluation did not find evidence to support these positive returns. The evaluation also did not find evidence that the project improved cost efficiency or budget execution, implying that the project likely did not have a positive ERR.
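For readers unfamiliar with the metric, an ERR is the discount rate at which the net present value of a project's yearly net benefits equals zero. The sketch below finds that rate by bisection and compares it with the 10% threshold. The cash-flow stream is hypothetical and is not MCC's economic model, and the function names are invented for this example.

```python
def npv(rate, net_benefits):
    """Net present value of yearly net benefits; year 0 is not discounted."""
    return sum(b / (1.0 + rate) ** t for t, b in enumerate(net_benefits))


def economic_rate_of_return(net_benefits, low=-0.9, high=1.0, tol=1e-9):
    """Find the discount rate where NPV is zero, using bisection.

    Assumes NPV changes sign once between `low` and `high`, which holds for
    up-front costs followed by a stream of benefits.
    """
    if npv(low, net_benefits) * npv(high, net_benefits) > 0:
        raise ValueError("NPV does not change sign on the search interval")
    while high - low > tol:
        mid = (low + high) / 2.0
        if npv(low, net_benefits) * npv(mid, net_benefits) <= 0:
            high = mid  # the root lies in the lower half
        else:
            low = mid   # the root lies in the upper half
    return (low + high) / 2.0


# Hypothetical stream: a cost of 100 in year 0, then benefits of 30 a year for 5 years.
flows = [-100.0, 30.0, 30.0, 30.0, 30.0, 30.0]
err = economic_rate_of_return(flows)
HURDLE = 0.10  # the 10% threshold stated in the text
print(f"ERR = {err:.1%}; clears the hurdle: {err > HURDLE}")
```

If the benefit stream is zero, as the evaluation findings suggest for cost efficiency and budget execution, no positive discount rate can bring the NPV to zero, which is why the evaluator's feedback implies the project likely did not have a positive ERR.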

MCC Learning

MCC, the MCA’s project implementers, and MCC’s evaluators should remain aware of nationwide policy changes and trends during project design, implementation, and evaluation in order to enhance project outcomes and to ensure accurate results measurement.

MCC should respect and enforce its conditions precedent concerning project implementation. The project was to advance from Phase 1 to Phase 2 only if the program logic was clarified and the ERR was calculated. MCC decided to advance without achieving these milestones.

Evaluation questions should be linked to concepts that are clearly articulated in the program logic. Corruption was included as an evaluation question, though corruption is not explicitly cited in the program logic. This may have confused interpretation of results.

Policy and institutional reform projects should ensure that MCC has access to internal management information systems and administrative data. This access ensures that rigorous evaluations are possible. The independent evaluator was able to conduct an impact evaluation because the evaluator consistently had access to the necessary data.

Evaluation Methods

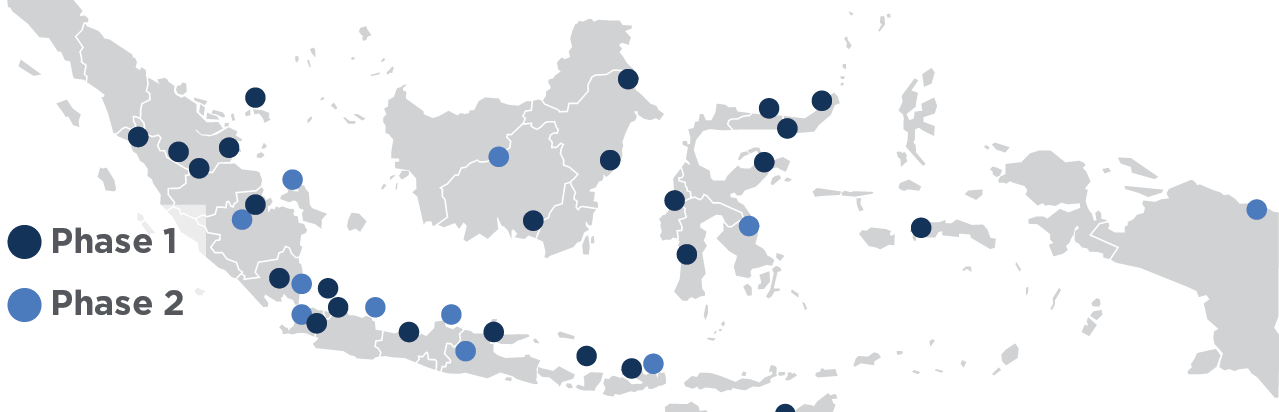

Implementation locations and phases

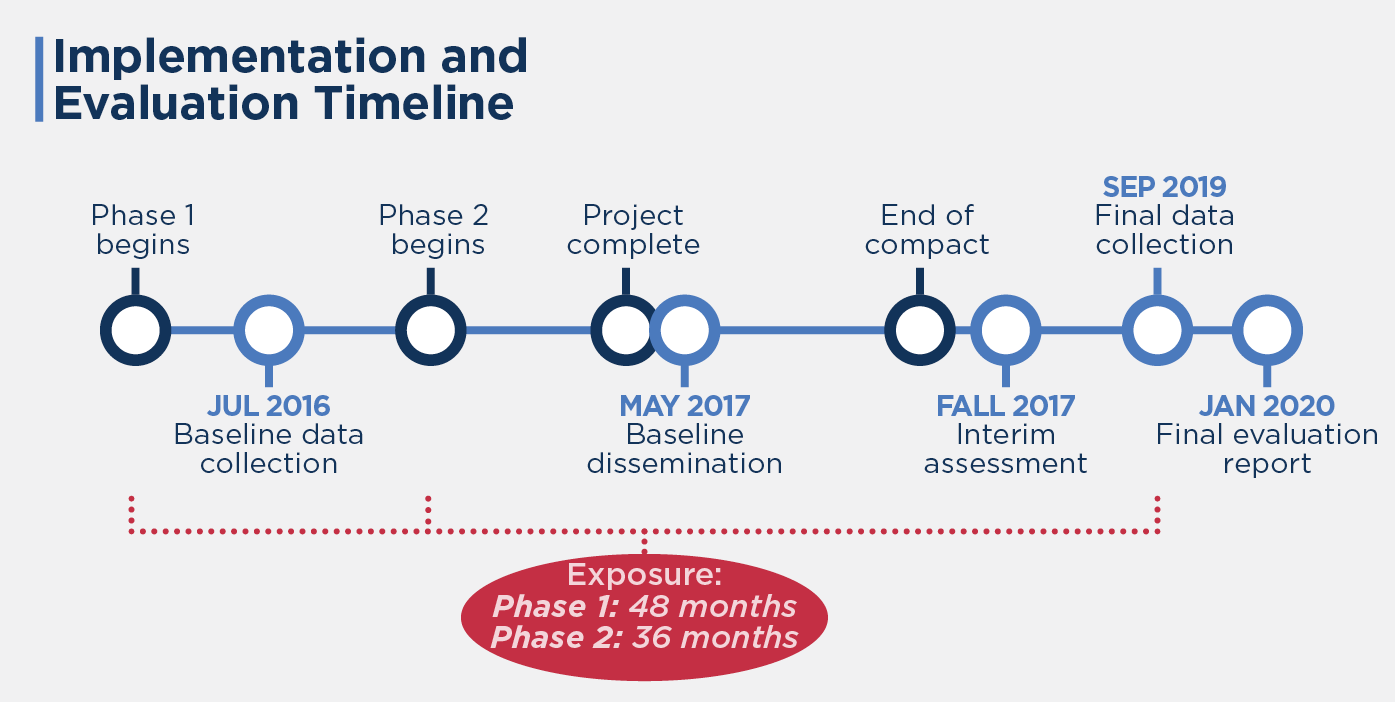

The final evaluation included an impact evaluation with a quasi-experimental design using weighted difference-in-differences and a mixed-methods performance evaluation to measure the project’s impact on key procurement outcomes. MCC divided the project into two phases due to the uncertainty around the program logic and economic model during the project design phase. The Phase 1 PSUs were supposed to be evaluated before a second phase was approved and implemented. Baseline data were collected in July and August 2016, and final data were collected in August and September 2019, more than one year after the project was completed in April 2018. The exposure period was approximately 48 months for Phase 1 and 36 months for Phase 2.

The qualitative approach involved semi-structured interviews conducted in August–September 2019 with 164 employees from Phase 1 and Phase 2 PSUs as well as spending units, or the departments on whose behalf the PSUs conduct the procurement. The interview data were synthesized to answer questions that assessed changes along five components of the organizational change 5-S Framework (5-S): shared values, structure, systems, skills, and staffing.

The quantitative impact evaluation drew on two distinct data sources, structured staff surveys and procurement system tender data, from a treatment group composed of 12 PSUs and a comparison group. There were 10 PSUs at baseline and 13 at endline. The treatment group comprised PSUs selected for Phase 2 of implementation and the comparison group comprised PSUs shortlisted but not selected for Phase 2. To account for any initial differences between the treatment and comparison groups, data on baseline characteristics were used to assign analysis weights to PSUs in the comparison group. The weights were based on predicted probabilities of selection for the project, estimated with a logistic regression of a treatment dummy on the PSU baseline characteristics closest to the factors that influenced selection by the project. The results likely underestimate effects since the comparison group also received some treatment through nationwide activities. Nonetheless, the results reflect the impact of intensive PSU-level project activities, which accounted for the largest share of resources expended by the project.
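The weighting step can be illustrated with a minimal sketch. It fits a logistic regression of the treatment dummy on one baseline characteristic and converts the predicted probabilities into weights for the comparison group. Several things here are assumptions: the data are hypothetical, the single standardized covariate stands in for the actual baseline characteristics, and the odds formula p / (1 - p) is a common choice for reweighting a comparison group that the report does not confirm the evaluators used.

```python
import math


def predict(beta, row):
    """Predicted probability of selection for one PSU."""
    z = beta[0] + sum(b * x for b, x in zip(beta[1:], row))
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(X, y, lr=0.1, steps=5000):
    """Fit a logistic regression by batch gradient ascent on the log-likelihood.

    Returns [intercept, coefficient_1, ...].
    """
    beta = [0.0] * (len(X[0]) + 1)
    for _ in range(steps):
        grad = [0.0] * len(beta)
        for row, label in zip(X, y):
            residual = label - predict(beta, row)
            grad[0] += residual
            for j, x in enumerate(row):
                grad[j + 1] += residual * x
        beta = [b + lr * g / len(X) for b, g in zip(beta, grad)]
    return beta


def analysis_weights(X, treated):
    """Weight 1 for treated PSUs; odds of selection for comparison PSUs (assumed formula)."""
    beta = fit_logistic(X, treated)
    weights = []
    for row, t in zip(X, treated):
        p = predict(beta, row)
        weights.append(1.0 if t == 1 else p / (1.0 - p))
    return weights


# Hypothetical PSUs: one standardized baseline characteristic each.
X = [[1.2], [0.8], [0.3], [-0.1], [0.9], [-0.4], [0.1], [-1.0], [0.5], [-0.7]]
treated = [1, 1, 1, 1, 0, 0, 0, 0, 1, 0]
weights = analysis_weights(X, treated)
```

Comparison PSUs that resemble the selected PSUs at baseline receive larger weights, so the weighted comparison group looks more like the treatment group before outcomes are compared.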

The analysis of the structured surveys was a cross-sectional difference-in-differences regression. In total, there were 426 staff at baseline and 658 at endline. The outcomes included variables for staff perceptions of changes in 5-S using a Likert scale, as well as binary outcomes such as use of contracts and public-private partnerships. Analysts compared two points in time: baseline and endline. The analysis of the tender data used a comparative interrupted time series regression of 18,447 tenders from the PMIS between the end of 2014 and the end of September 2018. The analysis compared multiple points in time to focus on the outcomes of time efficiency and economic efficiency.
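The two-period comparison behind the survey analysis can be sketched as a weighted difference-in-differences: the change in the treatment group's mean outcome minus the change in the comparison group's weighted mean outcome. The evaluation estimated this with a regression; with two groups, two periods, and no other covariates, the regression's interaction coefficient equals the difference of weighted means computed here. The records and weights are hypothetical, and the function names are invented for this example.

```python
from collections import defaultdict


def weighted_mean(values, weights):
    """Mean of `values` weighted by `weights`."""
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)


def did_estimate(records):
    """Weighted difference-in-differences from repeated cross-sections.

    Each record is (treated, endline, outcome, weight), with treated and
    endline coded as 0 or 1.
    """
    outcomes = defaultdict(list)
    weights = defaultdict(list)
    for treated, endline, outcome, weight in records:
        outcomes[(treated, endline)].append(outcome)
        weights[(treated, endline)].append(weight)
    mean = {cell: weighted_mean(outcomes[cell], weights[cell]) for cell in outcomes}
    treated_change = mean[(1, 1)] - mean[(1, 0)]
    comparison_change = mean[(0, 1)] - mean[(0, 0)]
    return treated_change - comparison_change


# Hypothetical staff quiz scores: (treated, endline, score, analysis weight).
records = [
    (1, 0, 9.0, 1.0), (1, 0, 10.0, 1.0),   # treatment group, baseline
    (1, 1, 11.0, 1.0), (1, 1, 12.0, 1.0),  # treatment group, endline
    (0, 0, 8.0, 2.0), (0, 0, 10.0, 1.0),   # comparison group, baseline
    (0, 1, 9.0, 2.0), (0, 1, 11.0, 1.0),   # comparison group, endline
]
effect = did_estimate(records)
print(f"difference-in-differences estimate: {effect:.2f} points")
```

In this made-up example the treatment group improves by 2 points and the weighted comparison group by 1 point, so the estimated effect is about 1 point. Subtracting the comparison group's change is what removes shifts that affected all PSUs, such as nationwide policy changes.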

2020-002-2398